AI/ML News

Chip Huyen: Designing Machine Learning Systems

Chip Huyen just came out with a new book with O'Reilly entitled Designing Machine Learning Systems. I'm not going to pontificate here; Chip Huyen wrote it, the reviews are glowing, need I say more?

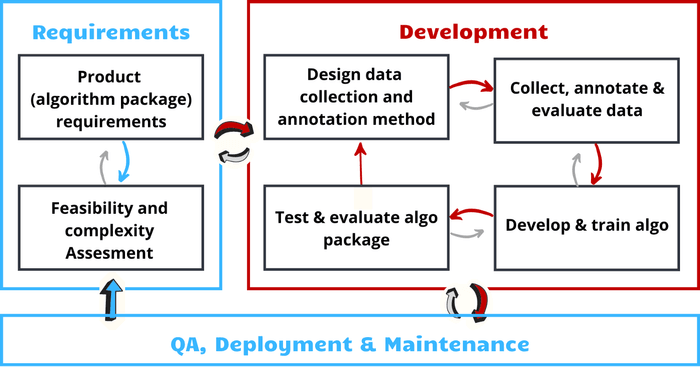

Jenny Abramov: An Agile Framework for AI Projects — Development, QA, Deployment and Maintenance

Jenny Abramov wrote a piece in Towards Data Science presenting an "iterative-lifecycle framework" adapted to AI-centered software. She outlines important considerations for working through the framework, which depend on your use case, data, and business problem.

She suggests using DVC for larger, more complex datasets and stresses the need for reproducibility in experimentation, which DVC can also help with (see Technical Product Manager Dave Berenbaum’s post on experiment versioning).

In addition, she discusses issues with quality assurance in deployment and the maintenance of the system.

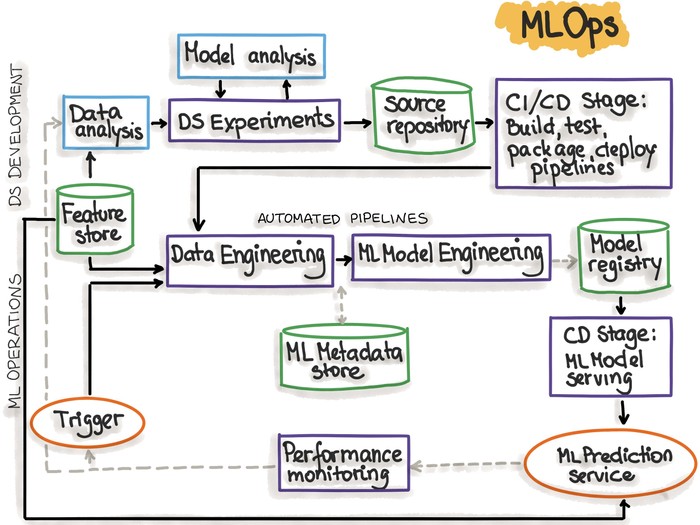

MLOps Guide from INNOQ

Dr. Larysa Visengeriyeva, Anja Kammer, Isabel Bär, Alexander Kniesz, and Michael Plöd of INNOQ (a software development, strategy, and technology consultancy) created this very thorough resource on MLOps, going through all the principles and "iterative-incremental" steps of the process (there's an iterative pattern here 😉). The authors cover Automation, Continuous X (hello CML and TPI), Versioning (hello DVC!), Experiments Tracking (noted DVC here because indeed DVC does experiment tracking too!), Testing, Monitoring, the "ML Test Score" System, Reproducibility, Modularity, ML-based Software Delivery Metrics, and MLOps Principles and Best Practices. Definitely a good resource for MLOps, and filled with more resources as well.

Also interesting from INNOQ is their Artist-in-residence program, created because they "believe in the conscious reflection between technology and society" and feel art is well suited for this reflection. See the work below by Studio Waltz Binaire based on the question: What traces do we leave behind with technology?

Laszlo Sragner: LinkedIn discussion on Code Quality

Laszlo Sragner, a frequent contributor to the MLOps Community who often drives discussions and helps others in the MLOps Community Slack channel, posed an interesting point about code quality on LinkedIn. Join the discussion and weigh in at this post.

Company News

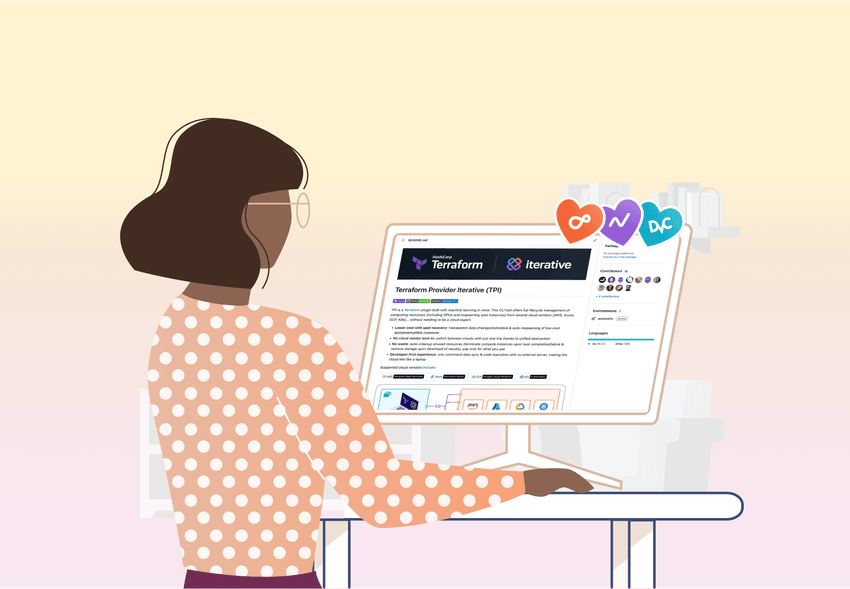

ICYMI: We released TPI! 🎉

On April 27th we released the latest offering to our tool ecosystem.

Terraform Provider Iterative (TPI) is a Terraform plugin built with machine learning in mind. Full lifecycle management of computing resources (including GPUs and respawning spot instances) from several cloud vendors (AWS, Azure, GCP, K8s)… without needing to be a cloud expert.

- Lower cost with spot recovery: transparent data checkpoint/restore & auto-respawning of low-cost spot/preemptible instances

- No cloud vendor lock-in: switch between clouds with just one line thanks to unified abstraction

- No waste: auto-cleanup unused resources (terminate compute instances upon task completion/failure & remove storage upon download of results), pay only for what you use

- Developer-first experience: one-command data sync & code execution with no external server, making the cloud feel like a laptop

⚙️ Read: Moving Local Experiments to the Cloud with Terraform Provider Iterative (TPI) tutorial
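To make the "checkpoint/restore" idea behind spot recovery a little more concrete, here is a minimal Python sketch of a training loop that can pick up where it left off after an interruption. This is not TPI's implementation, and it does not use any TPI API; the `train` function, the JSON checkpoint file, and the `interrupt_at` parameter are all hypothetical names made up for illustration.

```python
import json
from pathlib import Path


def train(total_steps, checkpoint_path, interrupt_at=None):
    """Run a toy training loop that resumes from a checkpoint file if one exists.

    `interrupt_at` is a hypothetical knob that simulates a spot instance
    being reclaimed at a given step.
    """
    checkpoint = Path(checkpoint_path)
    state = {"step": 0, "loss": 1.0}

    # Restore: start from the last saved state instead of from scratch.
    if checkpoint.exists():
        state = json.loads(checkpoint.read_text())

    while state["step"] < total_steps:
        if interrupt_at is not None and state["step"] == interrupt_at:
            raise RuntimeError("simulated spot-instance interruption")
        state["step"] += 1
        state["loss"] *= 0.5  # stand-in for a real optimization step
        # Checkpoint: persist progress after every step.
        checkpoint.write_text(json.dumps(state))

    return state
```

Calling `train(5, "state.json", interrupt_at=3)` fails partway through, and a second call to `train(5, "state.json")` continues from step 3 rather than step 0. A real setup would also need the checkpoint to live on storage that survives the instance, which is the part a tool has to handle for you.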

Stay tuned for more tutorials and use cases to come!

🚀Mission Impossible - We have a mission statement!

We did it! This year we surveyed the entire team to arrive at a mission statement for Iterative. It was no small feat to decide what it should be, given the early stage of our industry, the variety of our tools, and the perennial struggle of figuring out the best and most concise way to convey these ideas (you know our penchant for abbreviations). But we persevered and succeeded. Behold Iterative's new mission statement:

We deliver the best developer experience for machine learning teams by creating an ecosystem of open, modular ML tools.

As always the door is open for your feedback on how we can serve your needs better!

ODSC East

We attended our first post-pandemic, in-person conference in Boston last month. It was awesome to be together as a team, see Dmitry Petrov, Milecia McGregor, and Alex Kim in action, and talk to attendees and other vendors at the conference. We are looking forward to MLOps World next month!

✍🏼 Tons of new content on the blog

Our team has been on fire creating content for you. 🔥 Don't miss the following:

- Need to get started with CML and AWS? Rob de Wit shows you how to train and save your models with CML in a two-part series: one using a self-hosted AWS EC2 runner, and one with CML and DVC on a dedicated AWS EC2 runner.

- The Part 1, Part 2 and Part 3 tutorials of Alex Kim's End-to-End Computer Vision API project are out and filled with great learning!

- Milecia McGregor brings the monthly roundup of the Community's best technical questions in our latest Community Gems. 💎

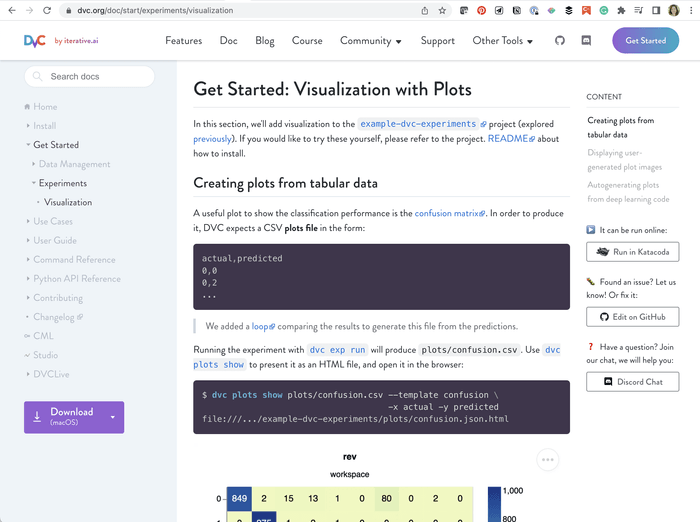

✨ Shiny New Docs

We have a new doc page showcasing the new visualizations added to the example-dvc-experiments repo. Whether you need to create plots from tabular data, use user-generated plots, or autogenerate plots from deep learning code, we've got you covered.

Dmitry Petrov on TFIR about Terraform Provider Iterative (TPI)

Dmitry Petrov recently sat down with Swapnil Bhartiya of TFIR to have a chat about TPI. Learn how to save your team valuable resources in your machine learning projects with Terraform Provider Iterative (TPI). You can watch the recording below.

🥳 Join our Release Party Meetup

We have another tool ready to debut on May 24th. On the 25th we'd love to have you join us for a Release Party Meetup. We will be celebrating the release of the new addition to our open-source tool ecosystem and have a demo of said tool! To join the fun, RSVP to the Meetup and mark your calendar!

New Tool Release Party

New hires

Wolmir Nemitz is our first team member from South America! We're getting closer to covering all the continents on our remote team map! From Brazil, Wolmir joins us as an Engineer for the 🤫 team (you'll find out June 14th). Wolmir has four dogs, two tortoises, and a budgie! 🦜

Pavel Chekmaryov joins us in People Operations, managing the hiring pipeline from Frankfurt, Germany, but soon to be Canada! He has spent the last eight years in startups, most recently at OccurAI, reinventing recruitment in the deep-tech/ML field. We look forward to him helping to grow our amazing team!

Open Positions

Even with our amazing new additions to the team, we're still hiring! Use this link to find details of all the positions and share with anyone you think may be interested! 🚀

Community News

Yet another tool comparison, imagine that!

So each month I tell you about yet another post to help you attempt to make sense of the vast MLOps tool space. Well, this month is no different. I mean you could be new here, right? 🤷🏽‍♀️ DoltHub tries to bring some clarity with this piece by comparing different data versioning tools and the intricacies of each. You do your research. You know we're partial.

I’m starting to wonder if all Data Science/AI teams need a role whose sole responsibility is keeping up to date with all the new tooling and the changes/updates to existing tooling in the MLOps space, and working out what might best suit the team. What should this position be called? The best answer wins a DVC t-shirt. See this Twitter thread to answer. (Hint: Funny answers will likely win 😉). Deadline: May 31st. Pass it around…

Andrey Cheptsov: Notebooks and MLOps. Choose One.

Andrey Cheptsov writes a piece pointing out how Jupyter Notebooks, while rightfully loved in data science work, fail pretty miserably in a production environment, and how relying on them can lead to bad habits. He notes that he's found:

For any ML model, the time spent in a Jupyter notebook is inversely proportional to its reproducibility. The reasons behind this rule are poor modularity and reusability of the code in notebooks, and poor integration with Git. - Andrey Cheptsov

He advocates for training your models using Python scripts, Git, and CI/CD to automatically shift your focus to creating reusable, testable code, and for using tools like Gradio and Streamlit to provide the interactivity of Jupyter notebooks. Sounds like a promising idea. 💡

Beyond ML

As noted above in our shiny new mission statement, our focus is to make tools for machine learning teams. It has, however, come to our attention that more and more users are using our tools for non-ML use cases.

Dror Speiser writes about a non-ML use case in A New Recipe for Idempotent Cloud Deployments in which he provides a tutorial for doing just that with DVC.

The benefits of the approach are:

- Changing one artifact’s code does not force rebuilding other artifacts, even if you’re building on a new VM every time.

- Changing only the deployment script won’t build any artifacts at all.

- You have an artifact repository that just works.

- Your Git history contains the hashes of all built artifacts.

- You can look up any artifact using its hash.
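The common thread in these benefits is that artifacts are keyed by a hash of what went into them. As a rough illustration of that idea only (this is not DVC's implementation and not the code from Dror's tutorial; `source_hash`, `build_if_changed`, and the cache layout are hypothetical), a minimal Python sketch might look like this:

```python
import hashlib
from pathlib import Path


def source_hash(paths):
    """Return a SHA-256 digest over the names and contents of the source files."""
    digest = hashlib.sha256()
    for path in sorted(Path(p) for p in paths):
        digest.update(path.name.encode())
        digest.update(path.read_bytes())
    return digest.hexdigest()


def build_if_changed(name, sources, build, cache_dir):
    """Build an artifact only if no cached copy exists for its source hash.

    `build` is a caller-supplied function that takes the source paths and
    returns the artifact as bytes. Returns (artifact_path, was_built).
    """
    cache = Path(cache_dir)
    cache.mkdir(parents=True, exist_ok=True)

    key = source_hash(sources)
    artifact = cache / f"{name}-{key}"

    # Same sources, same hash: reuse the cached artifact and skip the build.
    if artifact.exists():
        return artifact, False

    artifact.write_bytes(build(sources))
    return artifact, True
```

Because each artifact's key depends only on its own sources, editing one artifact's code leaves the others' keys untouched, and any earlier build can still be found by its hash as long as the cache directory is kept.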

We have opened up a #beyond-ml channel in our Discord Server. Do stop by and chat about alternate uses for our tools!

Upcoming Events

- 📣 Our next in-person conference will be MLOps World from June 7-10 in Toronto! We look forward to seeing Community members there!

- 📣 PyLadies Berlin is hosting Doreen, a data scientist working at Opinary, who will be presenting "Reproducible Machine Learning with DVC and Poetry" on May 17th. Join the event here.

- 📣 Nicolás Eiras will be presenting "Data Versioning: Towards Reproducibility in Machine Learning" at Embedded Vision Summit on May 18th in Santa Clara, California.

- 📣 Montreal PyData will host a Meetup on June 16th with two presentations: "Introduction to Trustworthy Machine Learning for the Enterprise" by Mohamed Leila, ServiceNow, and "ML in production in the video game industry: Ubisoft's use case" by Jean-Michel Daignan, Ubisoft.

Other Fun Stuff

- New Awesome list

- New Udemy Course including DVC (But don't forget our online course!)

- Would you like to get some good practice in? Join this Kaggle competition created by Jean-Michel Daignan based on a previous competition from Petfinder.my with some really cute pet images.

Tweet Love ❤️

We love it when our Community does conference talks on our tools! 🥰

The @EmbVisionSummit starts on Monday and our team is on its way!🚀

— Tryolabs (@tryolabs) May 13, 2022

We’ve had our fair share of experience on edge devices. Nicolás and our CTO @dekked_ will be there; come by to chat about our experiences.

Also, don't miss Nico's talk! May 18th - 2:05pm https://t.co/MfnEtOT29Y pic.twitter.com/r9itWhVjis

This Heartbeat was brought to you by the song "Tarkus" from Emerson, Lake, and Palmer which can be found on our MLOps Playlist, and the letters T, P, and I. 😉 See you next month!

Have something great to say about our tools? We'd love to hear it! Head to this page to record or write a Testimonial! Join our Wall of Love ❤️

Do you have any use case questions or need support? Join us in Discord!

Head to the DVC Forum to discuss your ideas and best practices.