Welcome to the July Heartbeat, our monthly roundup of new releases, talks, great articles, and upcoming events in the DVC community.

News

DVC 1.0 release

On June 22, DVC entered a new era: the official release of version 1.0. After several weeks of bug-catching with our pre-release, the team has issued DVC 1.0 for the public! Now when you install DVC through your package manager of choice, you'll get the latest version. Welcome to the future.

To recap, DVC 1.0 has some big new features like:

- Plots powered by Vega-Lite so you can compare metrics across commits

- New and easier pipeline configuration files: edit your DVC pipeline like a text file!

- Optimizations for data transfer speed

Read all the release notes for more, and stop by our Discord if you need support migrating (don't worry, 1.0 is backwards compatible).

Virtual meetup!

In May, we had our first ever virtual meetup. We had amazing talks from Dean Pleban and Elizabeth Hutton, plus time for Q&A with the DVC team. You can watch the recording if you missed it!

On Thursday, July 30, we're holding our second meetup! Ambassador Marcel Ribeiro-Dantas is hosting once again. We'll have short talks about causal modeling and CI/CD, plus lots of time for chatting and catching up. Please RSVP!

July DVC Meetup: Data Science & DevOps!

This meetup will be hosted by DVC Ambassador Marcel! We have two 10-minute talks on the agenda:

- Causal Modeling with DVC - Marcel

- Continuous integration for ML case studies - Elle

Following the talks, we'll have Q&A with the DVC team and time for community discussion.

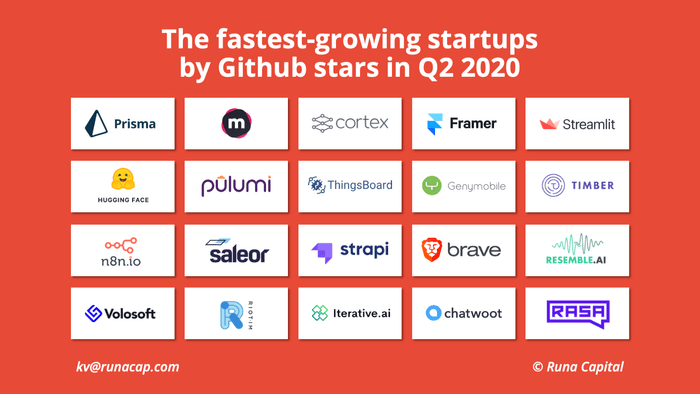

DVC is in the top 20 fastest-growing open source startups

Konstantin Vinogradov at Runa Capital used the GitHub API to identify the fastest-growing public repositories on GitHub in terms of stars and forks. He used these metrics to estimate the top 20 fastest-growing startups in open source software. And guess what, DVC made the cut! We're in great company.

New team member

We have a new teammate! Maxim Shmakov, previously of Yandex, is a front-end engineer joining us from Moscow. Please welcome him to DVC. 👋

Community activity

We've been busy! Although we are mostly homebound these days, there has been no shortage of speaking engagements. Here's a recap.

Meetings and talks

- Co-founders Dmitry and Ivan appeared on the HasGeek TV series Making Data Science Work to discuss engineering for data science with hosts Venkata Pingali and Indrayudh Ghoshal. The livestream is available for viewing on YouTube!

- Dmitry appeared on the MLOps.community meetup to chat with host Demetrios Brinkmann. They talked about the open source ecosystem, the difference between tools and platforms, and what it means to codify data.

- I (Elle) gave a talk called "Adapting continuous integration and continuous delivery for ML" at the MLOps Production & Engineering World meeting. I shared an approach to using GitHub Actions with ML projects. Video coming soon!

Elle O'Brien is currently explaining the adaptation of continuous integration and continuous delivery for ML at #MLOPS2020!

— Toronto Machine Learning Society (TMLS) (@TMLS_TO) June 18, 2020

From explaining DVC to providing great examples - a very interesting talk with @andronovhopf taking place right now! pic.twitter.com/dJjuLb0k4F

- Extremely early the next morning, clinician-scientist Cris Lanting and I co-led a workshop about developing strong computational infrastructure and practices in research as part of the Virtual Conference on Computational Audiology. We talked about big ideas for making scientific research reproducible, manageable, and shareable. For the curious, the workshop is still viewable!

- DVC has a virtual poster at SciPy 2020! We prepared a demo about packaging models and datasets like software so they can be widely disseminated via GitHub.

Good reads

Some excellent reading recommendations from the community:

- Data scientist Déborah Mesquita published a thorough guide to using new DVC 1.0 pipelines in a sample ML project. It's truly complete, covering data collection to model evaluation, with detailed code examples. If you are new to pipelines, do not miss this!

The ultimate guide to building maintainable Machine Learning pipelines using DVC

- Caleb Kaiser of Cortex (another startup on Runa Capital's Top 20 list!) shared a thinkpiece about challenges from software engineering that can inform production ML. We really agree with what he has to say about reproducibility:

You typically hear about “reproducibility” in reference to ML research, particularly when a paper doesn’t include enough information to recreate the experiment. However, reproducibility also comes up a lot in production ML. Think of it this way — you’re on a team staffed with data scientists and engineers, and you’re all responsible for an image classification API. The data scientists are constantly trying new techniques and architectural tweaks to improve the model’s baseline performance, while at the same time, the model is constantly being retrained on new data. Looking over the APIs performance, you see one moment a week ago where the model’s performance dropped significantly. What caused that drop? Without knowing exactly how the model was trained, and on what data, it’s impossible to know for sure.

What software engineers can bring to machine learning

- Mukul Sood wrote about the Real World, a place beyond Jupyter notebooks where data is non-stationary and servers are unreliable! He covers some very real challenges for taking a data science project into production and introduces the need for CI/CD practices in healthy, scalable ML applications.

Scaling Machine Learning in the Real World

A nice tweet

We'll close on a nice tweet from Russell Jurney:

I have to say I am blown out of the water by @DVCorg

— Russell Jurney 🇺🇦 (@rjurney) May 30, 2020

DVC is incredibly powerful. Right now we’re just versioning input/output datasets in DVC against S3, but even this is incredibly useful and so much better than trying Git LFS (ugh) or manual archiving. https://t.co/5bf5VJuPaE

Thanks, we couldn't do it without our community! As always, thanks for joining us and reading. There are lots of ways to stay in touch and we always love to hear from you. Follow us on Twitter, join our Discord server, or leave a blog comment. Until next time! 😎